The Market for Legal Writing and Clinical Professors

Why do professors who teach legal writing and clinics earn significantly less than professors who teach other courses? Why are the writing and clinical professors less likely to hold tenure-track status? And why, finally, are these lower-paid, lower-status professors disproportionately female?

A common answer is: the market. Applicants for legal writing and clinical positions are plentiful, the argument goes, so the market drives their salaries and status down. Professors who teach other courses are more scarce and have more lucrative options; law schools must pay more (and offer tenure-track status) to attract them. Law schools also demand scholarship from professors teaching those other courses, and the pool of people capable of outstanding scholarship and good teaching is very small indeed. Salaries and status must be generous to land those rare individuals–but not so generous for legal writing and clinical professors.

This explanation (which I’ll call the “market hypothesis”) has some initial appeal, but thoughtful examination reveals several flaws. The most striking defect is this one: The market hypothesis doesn’t explain the very high percentage of women teaching legal writing and clinical courses. 62% of the faculty teaching clinics or externship courses identify as women; 72% of those who teach legal writing do so. The pool of law school graduates, in contrast, includes roughly equal numbers of men and women. So why don’t the hiring nets for clinical and legal writing positions pull up a more equal number of male and female professors?

If the market hypothesis is correct, it has to explain why an abundant applicant pool yields such gendered results. I explore below four ways in which the market hypothesis might coexist with our disproportionately female writing and clinical faculties.

» Read the full text for The Market for Legal Writing and Clinical Professors

Jobs and Salaries for New Lawyers

What does the job market look like for new lawyers? The ABA will soon release statistics about the Class of 2016, and NALP will add more information by the end of the summer. But the Bureau of Labor Statistics (BLS) gives us an advance peek.

Each year, BLS reports job numbers and salaries for a wide range of occupations. This series of reports includes only salaried positions; for the legal profession, the series omits both solo practitioners and equity partners in law firms. Still, since most new graduates seek salaried positions, these numbers offer a useful measure of the profession’s ability to absorb and pay new members.

The Seventeen Percent

In a recent column, Professor Steven Davidoff Solomon observes that the legal job market “is a world of haves and have-nots.” With BigLaw firms raising entry-level salaries from $160,000 to $180,000, he concludes, “[t]op law graduates are doing better than ever.” Conversely, “it is clear that it is harder out there for the lower-tier law schools and their graduates.”

I agree with Professor Solomon about the divided nature of our profession; that reality has haunted American lawyers for decades. Solomon, however, significantly overstates the percentage of law graduates who fall within his world of “haves” (those whose salaries recently climbed from $160,000 to $180,000).

Did Firms Raise Salaries High Enough?

Originally published on Above The Law.

Deborah Merritt, a law professor at the Ohio State University, published an informative analysis on her blog yesterday about the new market rate salary for large law firms, which has been extensively covered here on ATL.


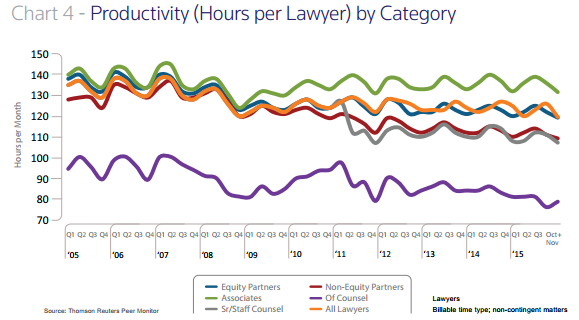

To her and virtually every other observer, the increase to $180,000 signals that many large firms are prospering. In part the increase reflects a small but steady increase in associate productivity since 2008, reaching roughly the levels from the last market rate increase in 2007. The following chart is from the 2016 Report on the State of the Legal Market, issued by Georgetown Law’s Center for the Study of the Legal Profession:

Associates are continuously more productive by this measure than any other category of worker, although at lower billable rates than partners. Interestingly, the gap in productivity between associates and other groups is significantly greater post-recession.


» Read the full text for Did Firms Raise Salaries High Enough?

$180,000

BigLaw firms gave 2016 graduates a sweet gift earlier this month: new associates at many of those firms will earn $180,000 (rather than $160,000) when they start work in the fall. That’s the first salary increase in BigLaw since 2007.

What should we make of this increase? It shows, certainly, that many BigLaw firms continue to prosper. But we already knew that from the firms’ reports of profits per partner. We also knew that associates are the most productive workers at those firms. This raise reflects rather belated recognition of that fact.

One could argue, in fact, that BigLaw partners are still undervaluing their associates. As Bruce MacEwen notes, the increase doesn’t match inflation since the last increase in BigLaw salaries. $180,000 in 2016 has less buying power than $160,000 did in 2007.

But those kids are going to be alright. I want to focus here on a shadow side of the BigLaw salary increase, one that the press and blogs haven’t discussed. BigLaw firms are paying more money–but to many fewer associates. This trend, which concentrates higher salaries in a smaller number of workers, has important implications for the legal job market.

Doctors, Lawyers & Software Developers

I wrote earlier this week about employment trends for doctors and lawyers. There is a third occupation that now vies with these professions for the affections of talented college graduates: software developer. Examining this occupation explains where some might-have-been lawyers are headed.

What Is a Software Developer?

Software developers, who are also called software engineers, are not programmers. They have a deep understanding of code, and know how to program, but that is not their primary focus. Instead, developers design the programs that give us so much delight–and occasional frustration. The developers also test programs to try to forestall that frustration and, when glitches happen, work with the programmers to fix the errant program.

Once you understand the nature of software development, you can see its attractions for students who might also consider law school. Software developers use their intellects, solve puzzles, and help people. They know more math than the typical lawyer, but their work focuses on logic and strategy rather than equations.

Add in these facts: It’s pretty cool to develop “apps,” many software companies are hip places to work, and you could become famous (and very rich) creating the next big program.

» Read the full text for Doctors, Lawyers & Software Developers

Professional Salaries

How much should a professional worker earn? The Department of Labor (DOL) recently decided that salaried professionals who work full-time should earn at least $47,476 per year. Under the Department’s new overtime rules, salaried workers earning less than that amount will be entitled to overtime pay for extra hours. A real professional, in DOL’s eyes, earns at least $913 per week–or $47,476 for a year of full-time work.
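The annual figure follows directly from the weekly one. As a minimal sketch, assuming a full-time year of 52 paid weeks (the function names here are illustrative, not part of any DOL tool), the arithmetic looks like this:

```python
# Weekly salary threshold from the DOL rule discussed above.
WEEKLY_THRESHOLD = 913  # dollars per week

# Assumption: a full-time year is counted as 52 paid weeks.
WEEKS_PER_YEAR = 52


def annual_threshold(weekly: int = WEEKLY_THRESHOLD, weeks: int = WEEKS_PER_YEAR) -> int:
    """Convert the weekly salary threshold into an annual figure."""
    return weekly * weeks


def below_salary_threshold(annual_salary: float) -> bool:
    """Return True when an annual salary falls short of the threshold."""
    return annual_salary < annual_threshold()


if __name__ == "__main__":
    print(annual_threshold())              # 47476
    print(below_salary_threshold(45_000))  # True
```

The check compares salary alone; it says nothing about the other tests that determine whether a worker is exempt from overtime.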

Hold your excitement: This salary test will not apply to lawyers, because DOL counts lawyers as professionals no matter how little they earn. See 29 CFR 541.600(e); 29 CFR 541.304. Employers are free to continue working their lawyers for long hours and low pay. It’s worth considering, however, what this rule tells us about societal expectations for professional pay. If professionals earn at least $47,476 per year, how do lawyers stack up?

Overpromising

Earlier this week, I wrote about the progress that law schools have made in reporting helpful employment statistics. The National Association for Law Placement (NALP), unfortunately, has not made that type of progress. On Wednesday, NALP issued a press release that will confuse most readers; mislead many; and ultimately hurt law schools, prospective students, and the profession. It’s the muddled, the false, and the damaging.

The Muddled

Much of the press release discusses the status of $160,000 salaries for new lawyers. This discussion vacillates between good news (for the minority of graduates who might get these salaries) and bad news. On the one hand, the $160,000 starting salary still exists. On the other hand, the rate hasn’t increased since 2007, producing a decline of 11.7% in real dollars (although NALP doesn’t spell that out).

On the bright side, the percentage of large firm offices paying this salary has increased from 27% in 2014 to 39% this year. On the down side, that percentage still doesn’t approach the two-thirds of large-firm offices that paid $160,000 in 2009. It also looks like the percentage of offices offering $160,000 to this fall’s associates (“just over one-third”) will be slightly lower than the current percentage.

None of this discussion tells us very much. This NALP survey focused on law firms, not individuals, and it tabulated results by office rather than firm. The fact that 39% of offices associated with the largest law firms are paying $160,000 doesn’t tell us how many individuals are earning that salary (let alone what percentage of law school graduates are doing so). And, since NALP has changed its definition of the largest firms since 2009, it’s hard to know what to make of comparisons with previous years.

In the end, all we know is that some new lawyers are earning $160,000–a fact that has been true since 2007. We also know that this salary must be very, very important because NALP repeats the figure (“$160,000”) thirty-two times in a single press release.

The False

In a bolded heading, NALP tells us that its “Data Represent Broad-Based Reporting.” This is so far off the mark that it’s not even “misleading.” It’s downright false. As the press release notes, only 5% of the firms responding to the survey employed 50 lawyers or fewer. (The accompanying table suggests that the true percentage was just 3.5%, but I won’t quibble over that.)

That’s a laughable representation of small law firms, and NALP knows it. Last year, NALP reported that 57.5% of graduates who took jobs with law firms went to firms of 50 lawyers or fewer. Smaller firms tend to hire fewer associates than large ones, and they don’t hire at all in some years. The percentage of “small” firms (those with 50 or fewer lawyers) in the United States undoubtedly is greater than 57.5%–and not anywhere near 5%.

NALP’s false statements go beyond a single heading. The press release specifically assures readers that “The report thus sheds light on the breadth of salary differentials among law firms of varying sizes and in a wide range of geographic areas nationwide, from the largest metropolitan areas to much smaller cities.” I don’t know how anyone can make that claim with a straight face, given the lack of response from law firms that make up the majority of firms nationwide.

This would be simply absurd, except NALP also tells readers that “the overall national median first-year salary at firms of all sizes was $135,000,” and that the median for the smallest firms (those with 50 or fewer lawyers) was $121,500. There is some fuzzy language about the median moving up during the last year because of “relatively fewer responses from smaller firms,” but that refers simply to the incremental change. Last year’s survey was almost as distorted as this year’s, with just 9.8% of responses coming from firms with 50 or fewer lawyers.

More worrisome, there’s no caveat at all attached to the representation that the median starting salary in the smallest law firms is $121,500. If you think that the 16 responding firms in this category magically represented salaries of all firms with 50 or fewer lawyers, see below. Presentation of the data in this press release as “broad-based” and “shed[ding] light on the breadth of salary differentials” is just breathtakingly false.

The Damaging

NALP’s false statements damage almost everyone related to the legal profession. The media have reported some of the figures from the press release, and the public response is withering. Clients assume that firms must be bilking them; otherwise, how could so many law firms pay new lawyers so much? Remember that this survey claims a median starting salary of $121,500 even at the smallest firms. Would you approach a law firm to draft your will or handle your divorce if you thought your fees would have to support that type of salary for a brand-new lawyer?

Prospective students will also be hurt if they act on NALP’s misrepresentations. Why shouldn’t they believe an organization called the “National Association for Law Placement,” especially when the organization represents its data as “broad-based”?

Ironically, though, law schools may suffer the most. What happens when prospective students compare NALP’s pumped-up figures with the ones on most of our websites? Nationwide, the median salary for 2013 graduates working in firms of 2-10 lawyers was just $50,000. So far, reports about the Class of 2014 look comparable. (As I’ve explained before, the medians that NALP reports for small firms are probably overstated. But let’s go with the reported median for now.)

When prospective students look at most law school websites, they’re going to see that $50,000 median (or one close to it) for small firms. They’re also going to see that a lot of our graduates work in those small firms of 2-10 lawyers. Nationwide, 8,087 members of the Class of 2013 took a job with one of those firms. That’s twice as many small firm jobs as ones at firms employing 500+ lawyers (which hired 3,980 members of the Class of 2013).

How do we explain the fact that so many of our graduates work at small firms, when NALP claims that these firms represent such a small percentage of practice? And how do we explain that our graduates average only $50,000 in these small-firm jobs, while NALP reports a median of $121,500? And then how do we explain the small number of our graduates who earn this widely discussed salary of $160,000?

With figures like $160,000 and $121,500 dancing in their heads, prospective students will conclude that most law schools are losers. By “most” I mean the 90% of us who fall outside the top twenty schools. Why would a student attend a school that offers outcomes so inferior to ones reported by NALP?

Even if these prospective students have read scholarly analyses showing the historic value of a law degree, they’re going to worry about getting stuck with a lemon school. And compared to the “broad-based” salaries reported by NALP, most of us look pretty sour.

Law schools need to do two things. First, we need to stop NALP from making false statements–or even just badly skewed ones. Each of our institutions pays almost $1,000 per year for this type of reporting. We shouldn’t support an organization that engages in such deceptive statements.

Second, we really do need to stop talking about BigLaw and $160,000 salaries. If Michael Simkovic and Frank McIntyre are correct about the lifetime value of a law degree, then we should be able to illustrate that value with real careers and real salaries. What do prosecutors earn compared to other government workers, both entry-level and after 20 years of experience? How much of a premium do businesses pay for a compliance officer with a JD? We should be able to generate answers to those questions. If the answers are positive, and we can place students in the appropriate jobs, we’ll have no trouble recruiting applicants.

If the answers are negative, we need to know that as well. We need to figure out the value of our degree, for our students. Let’s get real. Stop NALP from disseminating falsehoods, stop talking about $16*,*** salaries, and start talking about outcomes we can deliver.

Old Ways, New Ways

For the last two weeks, Michael Simkovic and I have been discussing the manner in which law schools used to publish employment and salary information. The discussion started here and continued on both that blog and this one. The debate, unfortunately, seems to have confused some readers because of its historical nature. Let’s clear up that confusion: We were discussing practices that, for the most part, ended four or five years ago.

Responding to both external criticism and internal reflection, today’s law schools publish a wealth of data about their employment outcomes; most of that information is both user-friendly and accurate. Here’s a brief tour of what data are available today and what the future might still hold.

ABA Reports

For starters, all schools now post a standard ABA form that tabulates jobs in a variety of categories. The ABA also provides this information on a website that includes a summary sheet for each school and a spreadsheet compiling data from all of the ABA-accredited schools. Data are available for classes going back to 2010; the 2014 data will appear shortly (and are already available on many school sites).

Salary Specifics

The ABA form does not include salary data, and the organization warns schools to “take special care” when reporting salaries because “salary data can so easily be misleading.” Schools seem to take one of two approaches when discussing salary data today.

Some provide almost no information, noting that salaries vary widely. Others post their “NALP Report” or tables drawn directly from that report. What is this report? It’s a collection of data that law schools have been gathering for about forty years, but not disclosing publicly until the last five. The NALP Report for each school summarizes the salary data that the school has gathered from graduates and other sources. You can find examples by googling “NALP Report” along with the name of a law school. NALP reports are available later in the year than ABA ones; you won’t find any 2014 NALP Reports until early summer.

NALP’s data gathering process is far from perfect, as both Professor Simkovic and I have discussed. The report for each school, however, has the virtue of both providing some salary information and displaying the limits of that information. The reports, for example, detail how many salaries were gathered in each employment category. If a law school reports salaries for 19/20 graduates working for large firms, but just 5/30 grads working in very small firms, a reader can make note of that fact. Readers also get a more complete picture of how salaries differ between the public and private sector, as well as within subsets of those groups.

Before 2010, no law school shared its NALP Report publicly. Instead, many schools chose a few summary statistics to disclose. A common approach was to publish the median salary for a particular law school class, without further information about the process of obtaining salary information, the percentage of salaries gathered, or the mix of jobs contributing to the median. If more specific information made salaries look better, schools could (and did) provide that information. A school that placed a lot of graduates in judicial clerkships, government jobs, or public interest positions, for example, often would report separate medians for those categories–along with the higher median for the private sector. Schools had a lot of discretion to choose the most pleasing summary statistic, because no one reported more detailed data.

Given the brevity of reported salary data, together with the potential for these summary figures to mislead, the nonprofit organization Law School Transparency (LST) began urging schools to publish their “full” NALP Reports. “Full” did not mean the entire report, which can be quite lengthy and repetitive. Instead, LST defined the portions of the report that prospective students and others would find helpful. Schools seem to agree with LST’s definition, publishing those portions of the report when they choose to disclose the information.

Today, according to LST’s tracking efforts, at least half of law schools publish their NALP Reports. There may be even more schools that do so; although LST invites ongoing communication with law schools, the schools don’t always choose to update their status for the LST site.

Plus More

The ABA’s standardized employment form, together with greater availability of NALP Reports, has greatly changed the information available to potential law students and other interested parties. But the information doesn’t stop with these somewhat dry forms. Many law schools have built upon these reports to convey other useful information about their graduates’ careers. Although I have not made an exhaustive review, the contemporary information I’ve seen seems to comply with our obligation to provide information that is “complete, accurate and not misleading to a reasonable law school student or applicant.”

In addition to these efforts by individual schools, the ABA has created two websites with consumer information about law schools: the employment site noted above and a second site with other data regularly reported to the ABA. NALP has also increased the amount of data it releases publicly without charge. LST, finally, has become a key source for prospective students who want to sort and compare data drawn from all of these sources. LST has also launched a new series of podcasts that complement the data with a more detailed look at the wide range of lawyers’ work.

Looking Forward

There’s still more, of course, that organizations could do to gather and disseminate data about legal careers. I like Professor Simkovic’s suggestion that the Census Bureau expand the Current Population Survey and American Community Survey to include more detailed information about graduate education. These surveys were developed when graduate education was relatively uncommon; now that post-baccalaureate degrees are more common, it seems critical to have more rigorous data about those degrees.

I also hope that some scholars will want to gather data from bar records and other online sources, as I have done. This method has limits, but so do larger initiatives like After the JD. Because of their scale and expense, those large projects are difficult to maintain–and without regular maintenance, much of their utility falls.

Even with projects like these, however, law schools undoubtedly will continue to collect and publish data about their own employment outcomes. Our institutions compete for students, US News rank, and other types of recognition. Competition begets marketing, and marketing can lead to overstatements. The burden will remain on all of us to maintain professional standards of “complete, accurate and not misleading” information, even as we talk with pride about our schools. Our graduates face similar obligations when they compete for clients. Although all of us chafe occasionally at duties, they are also the mark of our status as professionals.

Compared to What?

Some legal educators have a New Yorker’s view of the world. Like the parochial Manhattanite in Saul Steinberg’s famous illustration, these educators don’t see much beyond their own fiefdom. They see law graduates out there in the world, practicing their profession or working in related fields. And there are doctors, who (regrettably) make more money than lawyers do. But really, what else is there? What do people do if they don’t go to law school?

Michael Simkovic takes this position in a recent post, declaring (in bold) that: “The question everyone who decides not to go to law school . . . must answer is–what else out there is better?” In a footnote, Simkovic concedes that “[a]nother graduate degree might be better than law school for a particular individual,” but he clearly doesn’t think much of the idea.

People, of course, work in hundreds of occupations other than law. Some of them even enjoy their work. Simkovic’s concern lies primarily with the financial return on college and graduate degrees. Even here, though, the contemporary options are much broader than many legal educators realize.

Time Was: The 1990s

Financially, the late twentieth century was a good time to be a lawyer. When the Bureau of Labor Statistics (BLS) published its first Occupational Employment Statistics (OES) in 1997, the four occupations with the highest salaries were medicine, dentistry, podiatry, and law. Those four occupations topped the salary list (in that order) whether sorted by mean or median salary. [Note that OES collects data only on salaries; it does not include self-employed individuals like solo practitioners or partners–whether in law or medicine. For more on that point, see the end of this post.]

Law was a pretty good deal in those days. The graduate program was just three years, rather than four. There were no college prerequisites and no post-graduate internships. Knowledge of math was optional, and exposure to bodily fluids minimal. Imagine earning a median salary of $109,987 (in 2014 dollars) without having to examine feet! Then again, a willingness to spend four years of graduate school studying feet, along with a lifetime of treating them, would have netted you a 28% increase in median salary.

But let’s not dally any longer in the twentieth century.

Time Is: 2014

BLS just released its latest survey of occupational wages, and the results show how much the economy has changed. Law practice has slipped to twenty-second place in a listing of occupations by mean salary, and twenty-sixth place when ranked by median. One subset of lawyers, judges and magistrates, holds twenty-fifth place on the list of median salaries, but practicing lawyers have slipped a notch lower.

About half the slippage in law’s salary prominence stems from the splintering of medical occupations, both in the real world and as measured by BLS. We no longer visit “doctors,” we see pediatricians, general practitioners, internists, obstetricians, anesthesiologists, surgeons, and psychiatrists–often in that order. These medical specialists, along with the dentists and podiatrists, all enjoy a higher median salary than lawyers.

There are two other health-related professions, meanwhile, that have moved ahead of lawyers in wages: nurse anesthetists and pharmacists. Both of these fields require substantial graduate education: at least two years for nurse anesthetists and two to four years for pharmacists. But the training pays off with a median salary of $153,780 for nurse anesthetists and $120,950 for pharmacists.

Today’s college graduates, furthermore, don’t have to deal with teeth, airways, or medications to earn more than lawyers do. The latest BLS survey includes nine other occupations that top lawyers’ median salary: financial managers, airline pilots, natural sciences managers, air traffic controllers, marketing managers, computer and information systems managers, petroleum engineers, architectural and engineering managers, and chief executives.

How much do salaried lawyers earn in their more humble berth on the OES list? They collected a median salary of $114,970 in 2014. That’s good, but it’s only 4.5% higher (in inflation-controlled dollars) than the median salary in 1997. Pharmacists enjoyed a whopping 28% increase in median real wages to reach $120,950 in 2014. And the average nurse anesthetist earned a full third more than the average lawyer that year.

If you’re a college student willing to set your financial sights just a bit lower than the median salary in law practice, there are lots of other options. Here are some of the occupations with a 2014 median salary falling between $100,000 and $114,970: sales manager, physicist, computer hardware engineer, computer and information research scientist, compensation and benefits manager, purchasing manager, astronomer, aerospace engineer, political scientist, mathematician, software developer for systems software, human resources manager, training and development manager, public relations and fundraising manager, optometrist, nuclear engineer, and prosthodontist (those are the folks who will soon be fitting baby boomers for their false teeth).

Law graduates could apply their education to some of these jobs; with a few more years of graduate education, a savvy lawyer could offer the aging boomers a package deal on a will and a new pair of choppers. But the most common themes in these salary-leading occupations do not revolve around law. Instead, the themes are math, science, and management–none of which we teach very well in law school.

Twenty-first Century Humility

Lawyers will not disappear. Even Richard Susskind, who asked about “The End of Lawyers?” in a provocative book title, doesn’t think lawyers are done for. We still need lawyers to fill both traditional roles and new ones. Lawyers, however, will not have the same economic and social dominance that they enjoyed in the late twentieth century.

Some lawyers will still make a lot of money. As the American Lawyer proclaimed last year, the “super rich” are getting richer. But the prospects for other lawyers are less certain, and the appeal of competing fields has increased.

If law schools want to understand their decline in talented applicants, they need to look more closely at the competition. What do today’s high school students and middle schoolers think about law? Those students will choose their majors soon after arriving at college. Once they choose engineering, computer science, business, or health-related courses, a legal career will seem even less appealing. If we want potential students to find law attractive, we need to know more about their alternatives and preferences.

We also need to be realistic about how many students ultimately will–or should–pursue a law degree. As citizens of a healthy economy, we need doctors, nurse anesthetists, pharmacists, managers, and software developers. We even need the odd astronomer or two. Law is just one of the many occupations that make a society thrive. The twenty-first century is a time of interdependence that should bring a sense of humility.

Notes

Here are some key points about the method behind the OES survey. For more information, see this FAQ page, which includes the information I summarize here:

1. OES obtains wage data directly from establishments. This method eliminates bias that may occur when individuals report their own wages. The survey, however, includes only wage data for salaried employees. Solo practitioners (in any field) are excluded, as are individuals who draw their income entirely from partnerships or other forms of profit sharing.

2. “Wages” include production bonuses and tips, but not end-of-year bonuses, profit-sharing, or benefits.

3. Although BLS publishes OES data every year, the data are gathered on a rolling basis. Income for “1997” or “2014” reflects data gathered over three years, including the reference year. BLS adjusts wage figures for the two older years, using the Employment Cost Index, so the reported wages appear in then “current” dollars. The three-year collection period, however, can mask sudden shifts in employment trends.

4. BLS cautions against using OES data to compare changes in employment data over time, unless the user offers necessary context. In particular, it is important for readers to understand that short-term comparisons are difficult (because of the point in the previous paragraph) and that occupational categories change frequently. For those reasons, I have limited my cross-time comparisons and have noted the splintering of occupational categories. The limited comparison offered here, however, seems helpful in understanding the relationship of law practice to other high-paying occupations.

5. For the data used in this post, follow this link and download the spreadsheets. The HTML versions are prettier, but they do not include all of the data.

About Law School Cafe

Cafe Manager & Co-Moderator

Deborah J. Merritt

Cafe Designer & Co-Moderator

Kyle McEntee

Law School Cafe is a resource for anyone interested in changes in legal education and the legal profession.



Participate

Have something you think our audience would like to hear about? Interested in writing one or more guest posts? Send an email to the cafe manager at merritt52@gmail.com. We are interested in publishing posts from practitioners, students, faculty, and industry professionals.