Corrected Post on July Exam Results

[To replace my post from yesterday, which misreported Oklahoma’s pass rate]

States have started to release results from the July 2015 bar exam. So far the results I have seen are mixed:

Iowa’s first-time takers enjoyed a significant increase in the pass rate, from 82% in July 2014 to 91% in July 2015. (I draw all 2014 statistics in this post from NCBE data.)

New Mexico’s first-timers, on the other hand, suffered a substantial decline in their pass rate: 88% passed in July 2014 while just 76% did in July 2015.

In Missouri, the pass rate for first-timers fell slightly, from 88% in July 2014 to 87% in July 2015.

Two other states have released statistics for all test-takers, without identifying first-timers. In one of those, Oklahoma, the pass rate fell substantially–from 79% to 68%. In the other, Washington state, the rate was relatively stable at 77% in July 2014 and 76% in 2015.

A few other states have released individual results, but have not yet published pass rates. It may be possible to calculate overall pass rates in those states, but I haven’t tried to do so; first-time pass rates provide a more reliable year-to-year measure, so it is worth waiting for those.

Predictions

I suggest that bar results will continue to be mixed, due to three cross-cutting factors:

1. The July 2015 exam was not marred by the ExamSoft debacle. This factor will push 2015 passing rates up above 2014 ones.

2. The July 2015 MBE includes seven subjects rather than six. The more difficult exam will push passing rates down for 2015.

3. The qualifications of examinees, as measured by their entering LSAT scores, declined between the Class of 2014 and Class of 2015. This factor will also push passing rates down.

Overall, I expect pass rates to decline between July 2014 and July 2015; the second and third factors are strong ones. A contrary trend in a few states like Iowa, however, may underscore the effects of last year’s ExamSoft crisis. Once all results are available, more detailed analysis may show the relative influence of the three factors listed above.

ExamSoft: New Evidence from NCBE

Almost a year has passed since the ill-fated July 2014 bar exam. As we approach that anniversary, the National Conference of Bar Examiners (NCBE) has offered a welcome update.

Mark Albanese, the organization’s Director of Testing and Research, recently acknowledged that: “The software used by many jurisdictions to allow their examinees to complete the written portion of the bar examination by computer experienced a glitch that could have stressed and panicked some examinees on the night before the MBE was administered.” This “glitch,” Albanese concedes, “cannot be ruled out as a contributing factor” to the decline in MBE scores and pass rates.

More important, Albanese offers compelling new evidence that ExamSoft played a major role in depressing July 2014 exam scores. He resists that conclusion, but I think the evidence speaks for itself. Let’s take a look at the new evidence, along with why this still matters.

LSAT Scores and MBE Scores

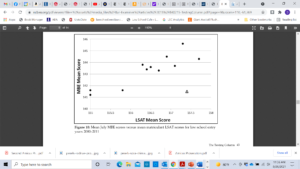

Albanese obtained the national mean LSAT score for law students who entered law school each year from 2000 through 2011. He then plotted those means against the average MBE scores earned by the same students three years later. The graph (Figure 10 on p. 43 of his article) looks like this:

As the black dots show, there is a strong linear relationship between scores on the LSAT and those for the MBE. Entering law school classes with high LSAT scores produce high MBE scores after graduation. For the classes that began law school from 2000 through 2010, the correlation is 0.89–a very high value.

Now look at the triangle toward the lower right-hand side of the graph. That symbol represents the relationship between mean LSAT score and mean MBE score for the class that entered law school in fall 2011 and took the bar exam in July 2014. As Albanese admits, this point is way off the line: “it shows a mean MBE score that is much lower than that of other points with similar mean LSAT scores.”

Based on the historical relationship between LSAT and MBE scores, Albanese calculates that the Class of 2014 should have achieved a mean MBE score of 144.0. Instead, the mean was just 141.4, producing elevated bar failure rates across the country. As Albanese acknowledges, there was a clear “disruption in the relationship between the matriculant LSAT scores and MBE scores with the July 2014 examination.”

Professors Jerry Organ and Derek Muller made similar points last fall, but they were handicapped by their lack of access to LSAT means. The ABA releases only median scores, and those numbers are harder to compile into the type of persuasive graph that Albanese produced. Organ and Muller made an excellent case with their data–one that NCBE should have heeded–but they couldn’t be as precise as Albanese.

But now we have NCBE’s Director of Testing and Research admitting that “something happened” with the Class of 2014 “that disrupted the previous relationship between MBE scores and LSAT scores.” What could it have been?

Apprehending a Suspect

Albanese suggests a single culprit for the significant disruption shown in his graph: He states that the Law School Admission Council (LSAC) changed the manner in which it reported scores for students who take the LSAT more than once. Starting with the class that entered in fall 2011, Albanese writes, LSAC used the high score for each of those test takers; before then, it used the average scores.

At first blush, this seems like a possible explanation. On average, students who retake the LSAT improve their scores. Counting only high scores for these test takers, therefore, would increase the mean score for the entering class. National averages calculated using high scores for repeaters aren’t directly comparable to those computed with average scores.

But there is a problem with Albanese’s rationale: He is wrong about when LSAC switched its method for calculating national means. That occurred for the class that matriculated in fall 2010, not the one that entered in fall 2011. LSAC’s National Decision Profiles, which report these national means, state that quite clearly.

Albanese’s suspect, in other words, has an alibi. The change in LSAT reporting methods occurred a year earlier; it doesn’t explain the aberrational results on the July 2014 MBE. If we accept LSAT scores as a measure of ability, as NCBE has urged throughout this discussion, then the Class of 2014 should have received higher scores on the MBE. Why was their mean score so much lower than their LSAT test scores predicted?

NCBE has vigorously asserted that the test was not to blame; they prepared, vetted, and scored the July 2014 MBE using the same professional methods employed in the past. I believe them. Neither the test content nor the scoring algorithms are at fault. But we can’t ignore the evidence of Albanese’s graph: something untoward happened to the Class of 2014’s MBE scores.

The Villain

The villain almost certainly is the suspect who appeared at the very beginning of the story: ExamSoft. Anyone who has sat through the bar exam, who has talked to test-takers during those days, or who has watched students struggle to upload a single law school exam knows this.

I still remember the stress of the bar exam, although 35 years have passed. I’m pretty good at legal writing and analysis, but the exam wore me out. Few other experiences have taxed me as much mentally and physically as the bar exam.

For a majority of July 2014 test-takers, the ExamSoft “glitch” imposed hours of stress and sleeplessness in the middle of an already exhausting process. The disruption, moreover, occurred during the one period when examinees could recoup their energy and review material for the next day’s exam. It’s hard for me to imagine that ExamSoft’s failure didn’t reduce test-taker performance.

The numbers back up that claim. As I showed in a previous post, bar passage rates dropped significantly more in states affected directly by the software crash than in other states. The difference was large enough that there is less than a 0.001 probability that it occurred by chance. If we combine that fact with Albanese’s graph, what more evidence do we need?

Aiding and Abetting

ExamSoft was the original culprit, but NCBE aided and abetted the harm. The testing literature is clear that exams can be equated only if both the content and the test conditions are comparable. The testing conditions on July 29-30, 2014, were not the same as in previous years. The test-takers were stressed, overtired, and under-prepared because of ExamSoft’s disruption of the testing procedure.

NCBE was not responsible for the disruption, but it should have refrained from equating results produced under the 2014 conditions with those from previous years. Instead, it should have flagged this issue for state bar examiners and consulted with them about how to use scores that significantly understated the ability of test takers. The information was especially important for states that had not used ExamSoft, but whose examinees suffered repercussions through NCBE’s scaling process.

Given the strong relationship between LSAT scores and MBE performance, NCBE might even have used that correlation to generate a second set of scaled scores correcting for the ExamSoft disruption. States could have chosen which set of scores to use–or could have decided to make a one-time adjustment in the cut score. However states decided to respond, they would have understood the likely effect of the ExamSoft crisis on their examinees.

Instead, we have endured a year of obfuscation–and of blaming the Class of 2014 for being “less able” than previous classes. Albanese’s graph shows conclusively that diminished ability doesn’t explain the abnormal dip in July 2014 MBE scores. Our best predictor of that ability, scores earned on the LSAT, refutes that claim.

Lessons for the Future

It’s time to put the ExamSoft debacle to rest–although I hope we can do so with an even more candid acknowledgement from NCBE that the software crash was the primary culprit in this story. The test-takers deserve that affirmation.

At the same time, we need to reflect on what we can learn from this experience. In particular, why didn’t NCBE take the ExamSoft crash more seriously? Why didn’t NCBE and state bar examiners proactively address the impact of a serious flaw in exam administration? The equating and scaling process is designed to assure that exam takers do not suffer by taking one exam administration rather than another. The July 2014 examinees clearly did suffer by taking the exam during the ExamSoft disruption. Why didn’t NCBE and the bar examiners work to address that imbalance, rather than extend it?

I see three reasons. First, NCBE staff seem removed from the experience of bar exam takers. The psychometricians design and assess tests, but they are not lawyers. The president is a lawyer, but she was admitted through Wisconsin’s diploma privilege. NCBE staff may have tested bar questions and formats, but they lack firsthand knowledge of the test-taking experience. This may have affected their ability to grasp the impact of ExamSoft’s disruption.

Second, NCBE and law schools have competing interests. Law schools have economic and reputational interests in seeing their graduates pass the bar; NCBE has economic and reputational interests in disclaiming any disruption in the testing process. The bar examiners who work with NCBE have their own economic and reputational interests: reducing competition from new lawyers. Self-interest is nothing to be ashamed of in a market economy; nor is self-interest incompatible with working for the public good.

The problem with the bar exam, however, is that these parties (NCBE and bar examiners on one side, law schools on the other) tend to talk past one another. Rather than gain insights from each other, the parties often communicate after decisions are made. Each seems to believe that it protects the public interest, while the other is driven purely by self interest.

This stand-off hurts law school graduates, who get lost in the middle. NCBE and law schools need to start listening to one another; both sides have valid points to make. The ExamSoft crisis should have prompted immediate conversations between the groups. Law schools knew how the crash had affected their examinees; the cries of distress were loud and clear. NCBE knew, as Albanese’s graph shows, that MBE scores were far below outcomes predicted by the class’s LSAT scores. Discussion might have generated wisdom.

Finally, the ExamSoft debacle demonstrates that we need better coordination–and accountability–in the administration and scoring of bar exams. When law schools questioned the July 2014 results, NCBE’s president disclaimed any responsibility for exam administration. That’s technically true, but exam administration affects equating and scaling. Bar examiners, meanwhile, accepted NCBE’s results without question; they assumed that NCBE had taken all proper factors (including any effect from a flawed administration) into account.

We can’t rewind administration of the July 2014 bar exam; nor can we redo the scoring. But we can create a better system for exam administration going forward, one that includes more input from law schools (who have valid perspectives that NCBE and state bar examiners lack) as well as more coordination between NCBE and bar examiners on administration issues.

ExamSoft Settlement

A federal judge has tentatively approved settlement of consolidated class action lawsuits brought by July 2014 bar examinees against ExamSoft. The lawsuits arose out of the well-known difficulties that test-takers experienced when they tried to upload their essay answers through ExamSoft’s software. I have written about this debacle, and its likely impact on bar scores, several times. For the most recent post in the series, see here.

Looking at this settlement, it’s hard to know what the class representatives were thinking. Last summer, examinees paid between $100 and $150 for the privilege of using ExamSoft software. When the uploads failed to work, they were unable to reach ExamSoft’s customer service lines. Many endured hours of anxiety as they tried to upload their exams or contact customer service. The snafu distracted them from preparing for the next day’s exam or getting some much-needed sleep.

What are the examinees who suffered through this “barmageddon” getting for their troubles? $90 apiece. That’s right, they’re not even getting a full refund on the fees they paid. The class action lawyers, meanwhile, will walk away with up to $600,000 in attorneys’ fees.

I understand that damages for emotional distress aren’t awarded in contract actions. I get that (and hopefully got that right on the MBE). But agreeing to a settlement that awards less than the amount exam takers paid for this shoddy service? ExamSoft clearly failed to perform its side of the bargain; the complaint stated a very straightforward claim for breach of contract. In addition, the plaintiffs invoked federal and state consumer laws that might have awarded other relief.

What were the class representatives thinking? Perhaps they used the lawsuit as a training ground to enter the apparently lucrative field of representing other plaintiffs in class action suits. Now that they know how to collect handsome fees, they’re not worried about the pocket change they paid to ExamSoft.

I believe in class actions–they’re a necessary procedure to enforce some claims, including the ones asserted in this case. But the field now suffers from so much abuse, with attorneys collecting the lion’s share of awards and class members receiving relatively little. It’s no wonder that the public, and some appellate courts, have become so cynical about class actions.

From that perspective, there’s a great irony in this settlement. People who wanted to be lawyers, and who suffered a compensable breach of contract while engaged in that quest, have now been shortchanged by the very professionals they seek to join.

ExamSoft Update

In a series of posts (here, here, and here) I’ve explained why I believe that ExamSoft’s massive computer glitch lowered performance on the July 2014 Multistate Bar Exam (MBE). I’ve also explained how NCBE’s equating and scaling process amplified the damage to produce a 5-point drop in the national bar passage rate.

We now have a final piece of evidence suggesting that something untoward happened on the July 2014 bar exam: The February 2015 MBE did not produce the same type of score drop. This February’s MBE was harder than any version of the test given over the last four decades; it covered seven subjects instead of six. Confronted with that challenge, the February scores declined somewhat from the previous year’s mark. The mean scaled score on the February 2015 MBE was 136.2, 1.8 points lower than the February 2014 mean scaled score of 138.0.

The contested July 2014 MBE, however, produced a drop of 2.8 points compared to the July 2013 test. That drop was about 56% larger than the February drop (put the other way, the February drop was 35.7% smaller than the July one). The July 2014 shift was also larger than any other year-to-year change (positive or negative) recorded during the last ten years. (I treat the February and July exams as separate categories, as NCBE and others do.)
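The size of that gap depends on which drop serves as the baseline. A minimal sketch of the arithmetic in Python, using only the figures quoted in this post (the variable names are illustrative):

```python
# Mean scaled MBE scores quoted in this post.
feb_2014, feb_2015 = 138.0, 136.2
feb_drop = feb_2014 - feb_2015   # February 2014 -> February 2015
july_drop = 2.8                  # July 2013 -> July 2014, as reported above

# How much larger the July drop is, measured against the February drop.
july_vs_feb = (july_drop - feb_drop) / feb_drop
# How much smaller the February drop is, measured against the July drop.
feb_vs_july = (july_drop - feb_drop) / july_drop

print(f"February drop: {feb_drop:.1f} points")
print(f"July drop is {july_vs_feb:.1%} larger than the February drop")
print(f"February drop is {feb_vs_july:.1%} smaller than the July drop")
```

Either way the comparison is framed, the July 2014 decline is clearly the larger of the two.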

The shift in February 2015 scores, on the other hand, is similar in magnitude to five other changes that occurred during the last decade. Scores dropped, but not nearly as much as in July–and that’s despite taking a harder version of the MBE. Why did the July 2014 examinees perform so poorly?

It can’t be a change in the quality of test takers, as NCBE’s president, Erica Moeser, has suggested in a series of communications to law deans and the profession. The February 2015 examinees started law school at about the same time as the July 2014 ones. As others have shown, law student credentials (as measured by LSAT scores) declined only modestly for students who entered law school in 2011.

We’re left with the conclusion that something very unusual happened in July 2014, and it’s not hard to find that unusual event: a software problem that occupied test-takers’ time, aggravated their stress, and interfered with their sleep.

On its own, my comparison of score drops does not show that the ExamSoft crisis caused the fall in July 2014 test performance. The other evidence I have already discussed is more persuasive. I offer this supplemental analysis for two reasons.

First, I want to forestall arguments that February’s performance proves that the July test-takers must have been less qualified than previous examinees. February’s mean scaled score did drop, compared to the previous February, but the drop was considerably less than the sharp July decline. The latter drop remains the largest score change during the last ten years. It clearly is an outlier that requires more explanation. (And this, of course, is without considering the increased difficulty of the February exam.)

Second, when combined with other evidence about the ExamSoft debacle, this comparison adds to the concerns. Why did scores fall so precipitously in July 2014? The answer seems to be ExamSoft, and we owe that answer to test-takers who failed the July 2014 bar exam.

One final note: Although I remain very concerned about both the handling of the ExamSoft problem and the equating of the new MBE to the old one, I am equally concerned about law schools that admit students who will struggle to pass a fairly administered bar exam. NCBE, state bar examiners, and law schools together stand as gatekeepers to the profession and we all owe a duty of fairness to those who seek to join the profession. More about that soon.

ExamSoft and NCBE

I recently found a letter that Erica Moeser, President of the National Conference of Bar Examiners (NCBE) wrote to law school deans in mid-December. The letter responds to a formal request, signed by 79 law school deans, that NCBE “facilitate a thorough investigation of the administration and scoring of the July 2014 bar exam.” That exam suffered from the notorious ExamSoft debacle.

Moeser’s letter makes an interesting distinction. She assures the deans that NCBE has “reviewed and re-reviewed” its scoring, equating, and scaling of the July 2014 MBE. Those reviews, Moeser attests, revealed no flaw in NCBE’s process. She then adds that, to the extent the deans are concerned about “administration” of the exam, they should “note that NCBE does not administer the examination; jurisdictions do.”

Moeser doesn’t mention ExamSoft by name, but her message seems clear: If ExamSoft’s massive failure affected examinees’ performance, that’s not our problem. We take the bubble sheets as they come to us, grade them, equate the scores, scale those scores, and return the numbers to the states. It’s all the same to NCBE if examinees miss points because they failed to study, law schools taught them poorly, or they were groggy and stressed from struggling to upload their essay exams. We only score exams, we don’t administer them.

But is the line between administration and scoring so clear?

The Purpose of Equating

In an earlier post, I described the process of equating and scaling that NCBE uses to produce final MBE scores. The elaborate transformation of raw scores has one purpose: “to ensure consistency and fairness across the different MBE forms given on different test dates.”

NCBE thinks of this consistency with respect to its own test questions; it wants to ensure that some test-takers aren’t burdened with an overly difficult set of questions–or conversely, that other examinees don’t benefit from unduly easy questions. But substantial changes in exam conditions, like the ExamSoft crash, can also make an exam more difficult. If they do, NCBE’s equating and scaling process actually amplifies that unfairness.

To remain faithful to its mission, it seems that NCBE should at least explore the possible effects of major blunders in exam administration. This is especially true when a problem affects multiple jurisdictions, rather than a single state. If an incident affects a single jurisdiction, the examining authorities in that state can decide whether to adjust scores for that exam. When the problem is more diffuse, as with the ExamSoft failure, individual states may not have the information necessary to assess the extent of the impact. That’s an even greater concern when nationwide equating will spread the problem to states that did not even contract with ExamSoft.

What Should NCBE Have Done?

NCBE did not cause ExamSoft’s upload problems, but it almost certainly knew about them. Experts in exam scoring also understand that defects in exam administration can interfere with performance. With knowledge of the ExamSoft problem, NCBE had the ability to examine raw scores for the extent of the ExamSoft effect. Exploration would have been most effective with cooperation from ExamSoft itself, revealing which states suffered major upload problems and which ones experienced more minor interference. But even without that information, NCBE could have explored the raw scores for indications of whether test takers were “less able” in ExamSoft states.

If NCBE had found a problem, there would have been time to consult with bar examiners about possible solutions. At the very least, NCBE probably should have adjusted its scaling to reflect the fact that some of the decrease in raw scores stemmed from the software crash rather than from other changes in test-taker ability. With enough data, NCBE might have been able to quantify those effects fairly precisely.

Maybe NCBE did, in fact, do those things. Its public pronouncements, however, have not suggested any such process. On the contrary, Moeser seems to studiously avoid mentioning ExamSoft. This reveals an even deeper problem: we have a high-stakes exam for which responsibility is badly fragmented.

Who Do You Call?

Imagine yourself as a test-taker on July 29, 2014. You’ve been trying for several hours to upload your essay exam, without success. You’ve tried calling ExamSoft’s customer service line, but can’t get through. You’re worried that you’ll fail the exam if you don’t upload the essays on time, and you’re also worried that you won’t be sufficiently rested for the next day’s MBE. Who do you call?

You can’t call the state bar examiners; they don’t have an after-hours call line. If they did, they probably would reassure you on the first question, telling you that they would extend the deadline for submitting essay answers. (This is, in fact, what many affected states did.) But they wouldn’t have much to offer on the second question, about getting back on track for the next day’s MBE. Some state examiners don’t fully understand NCBE’s equating and scaling process; those examiners might even erroneously tell you “not to worry because everyone is in the same boat.”

NCBE wouldn’t be any more help. They, as Moeser pointed out, don’t actually administer exams; they just create and score them.

Many distressed examinees called law school staff members who had helped them prepare for the bar. Those staff members, in turn, called their deans–who contacted NCBE and state bar examiners. As Moeser’s letters indicate, however, bar examiners view deans with some suspicion. The deans, they believe, are too quick to advocate for their graduates and too worried about their own bar pass rates.

As NCBE and bar examiners refused to respond, or shifted responsibility to the other party, we reached a stand-off: no one was willing to take responsibility for flaws in a very high-stakes test administered to more than 50,000 examinees. That is a failure as great as the ExamSoft crash itself.

ExamSoft: By the Numbers

Earlier this week I explained why the ExamSoft fiasco could have lowered bar passage rates in most states, including some states that did not use the software. But did it happen that way? Only ExamSoft and the National Conference of Bar Examiners have the data that will tell us for sure. But here’s a strong piece of supporting evidence:

Among states that did not experience the ExamSoft crisis, the average bar passage rate for first-time takers from ABA-accredited law schools fell from 81% in July 2013 to 78% in July 2014. That’s a drop of 3 percentage points.

Among the states that were exposed to the ExamSoft problems, the average bar passage rate for the same group fell from 83% in July 2013 to 78% in July 2014. That’s a 5-point drop, two percentage points more than the drop in the “unaffected” states.

Derek Muller did the important work of distinguishing these two groups of states. Like him, I count a state as an “ExamSoft” one if it used that software company and its exam takers wrote their essays on July 29 (the day of the upload crisis). There are 40 states in that group. The unaffected states are the other 10 plus the District of Columbia; these jurisdictions either did not contract with ExamSoft or their examinees wrote essays on a different day.

The comparison between these two groups is powerful. What, other than the ExamSoft debacle, could account for the difference between the two? A 2-point difference is not one that occurs by chance in a population this size. I checked and the probability of this happening by chance (that is, by separating the states randomly into two groups of this size) is so small that it registered as 0.00 on my probability calculator.
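Randomly re-splitting the jurisdictions into two groups of the same sizes, and asking how often the gap is at least as large as the observed one, is a permutation test. A minimal sketch in Python follows. It is an illustration only, not necessarily the exact calculation behind the figure above; the function name is hypothetical, and the jurisdiction labels and pass-rate changes are invented placeholders, not real state data.

```python
import random

def permutation_p_value(changes, affected, n_iter=20_000, seed=0):
    """One-sided permutation test on pass-rate changes.

    changes  -- dict mapping jurisdiction -> change in pass rate (points)
    affected -- set of jurisdictions treated as directly exposed
    Returns (observed_gap, p_value), where the gap is the mean change in
    the unexposed group minus the mean change in the exposed group.
    """
    names = list(changes)
    k = len(affected)

    def gap(group):
        inside = [changes[n] for n in names if n in group]
        outside = [changes[n] for n in names if n not in group]
        return sum(outside) / len(outside) - sum(inside) / len(inside)

    observed = gap(affected)
    rng = random.Random(seed)
    hits = 0
    for _ in range(n_iter):
        # Draw a random "exposed" group of the same size as the real one.
        if gap(set(rng.sample(names, k))) >= observed:
            hits += 1
    return observed, hits / n_iter

# Placeholder data (NOT real results): change in pass rate, in points.
toy_changes = {"A": -6, "B": -5, "C": -4, "D": -5,
               "E": -3, "F": -2, "G": -3, "H": -1}
toy_affected = {"A", "B", "C", "D"}

observed_gap, p_value = permutation_p_value(toy_changes, toy_affected)
print(f"observed gap: {observed_gap:.2f} points, p = {p_value:.4f}")
```

With real data, `changes` would hold the July 2013 to July 2014 change for all 51 jurisdictions and `affected` the 40 ExamSoft states. This sketch weights every jurisdiction equally, which is an assumption; weighting by number of examinees would be a different test.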

It’s also hard to imagine another factor that would explain the difference. What do Arizona, DC, Kentucky, Louisiana, Maine, Massachusetts, Nebraska, New Jersey, Virginia, Wisconsin, and Wyoming have in common other than that their test takers were not directly affected by ExamSoft’s malfunction? Large states and small states; Eastern states and Western states; red states and blue states.

Of course, as I explained in my previous post, examinees in 10 of those 11 jurisdictions ultimately suffered from the glitch; that effect came through the equating and scaling process. The only jurisdiction that escaped completely was Louisiana, which used neither ExamSoft nor the MBE. That state, by the way, enjoyed a large increase in its bar passage rate between July 2013 and July 2014.

This is scary on at least four levels:

1. The ExamSoft breakdown affected performance sufficiently that states using the software suffered an additional average drop of 2 percentage points in bar passage, beyond the drop seen in other states.

2. The equating and scaling process amplified the drop in raw scores. These processes dropped pass rates as much as three more percentage points across the nation. In states where raw scores were affected, pass rates fell an average of 5 percentage points. In other states, the pass rate fell an average of 3 percentage points. (I say “as much as” here because it is possible that other factors account for some of this drop; my comparison can’t control for that possibility. It seems clear, however, that equating and scaling amplified the raw-score drop and accounted for some–perhaps all–of this drop.)

3. Hundreds of test takers–probably more than 1,500 nationwide–failed the bar exam when they should have passed.

4. ExamSoft and NCBE have been completely unresponsive to this problem, despite the fact that these data have been available to them.

One final note: the comparisons in this post are a conservative test of the ExamSoft hypothesis, because I created a simple dichotomy between states exposed directly to the upload failure and those with no direct exposure. It is quite likely that states in the first group differed in the extent to which their examinees suffered. In some states, most test takers may have successfully uploaded their essays on the first try; in others, a large percentage of examinees may have struggled for hours. Those differences could account for variations within the “ExamSoft” states.

ExamSoft and NCBE could make those more nuanced distinctions. From the available data, however, there seems little doubt that the ExamSoft wreck seriously affected results of the July 2014 bar exam.

* I am grateful to Amy Otto, a former student who is wise in the way of statistics and who helped me think through these analyses.

ExamSoft After All?

Why did so many people fail the July 2014 bar exam? Among graduates of ABA-accredited law schools who took the exam for the first time last summer, just 78% passed. A year earlier, in July 2013, 82% passed. What explains a four-point drop in a single year?

The ExamSoft debacle looked like an obvious culprit. Time wasted, increased anxiety, and loss of sleep could have affected the performance of some test takers. For those examinees, even a few points might have spelled the difference between success and failure.

Thoughtful analyses, however, pointed out that pass rates fell even in states that did not use ExamSoft. What, then, explains such a large performance drop across so many states? After looking closely at the way in which NCBE and states grade the bar exam, I’ve concluded that ExamSoft probably was the major culprit. Let me explain why–including the impact on test takers in states that didn’t use ExamSoft–by walking you through the process step by step. Here’s how it could have happened:

Tuesday, July 29, 2014

Bar exam takers in about forty states finished the essay portion of the exam and attempted to upload their answers through ExamSoft. But for some number of them, the essays wouldn’t upload. We don’t know the exact number of affected exam takers, but it seems to have been quite large. ExamSoft admitted to a “six-hour backlog” and at least sixteen states ultimately extended their submission deadlines.

Meanwhile, these exam takers were trying to upload their exams, calling customer service, and worrying about the issue (wouldn’t you, if failure to upload meant bar failure?) instead of eating dinner, reviewing their notes for the next day’s MBE, and getting to sleep.

Wednesday, July 30, 2014

Test takers in every state but Louisiana took the multiple choice MBE. In some states, no one had been affected by the upload problem. In others, lots of people were. They were tired, stressed, and had spent less time reviewing. Let’s suppose that, due to these issues, the ExamSoft victims performed somewhat less well than they would have performed under normal conditions. Instead of answering 129 questions correctly (a typical raw score for the July MBE), they answered just 125 questions correctly.

August 2014: Equating

The National Conference of Bar Examiners (NCBE) received all of the MBE answers and began to process them. The raw scores for ExamSoft victims were lower than those for typical July examinees, and those scores affected the mean for the entire pool. Most important, mean scores were lower for both the “control questions” and other questions. “Control questions” is my own shorthand for a key group of questions; these are questions that have appeared on previous bar exams, as well as the most current one. By analyzing scores for the control questions (both past and present) and new questions, NCBE can tell whether one group of exam takers is more or less able than an earlier group. For a more detailed explanation of the process, see this article.

These control questions serve an important function; they allow NCBE to “equate” exam difficulty over time. What if the Evidence questions one year are harder than those for the previous year? Pass rates would fall because of an unfairly hard exam, not because of any difference in the exam takers’ ability. By analyzing responses to the control questions (compared to previous years) and the new questions, NCBE can detect changes in exam difficulty and adjust raw scores to account for them.

Conversely, these analyses can confirm that lower scores on an exam stem from the examinees’ lower ability rather than any change in the exam difficulty. Weak performance on control questions will signal that the examinees are “less able” than previous groups of examinees.

But here’s the rub: NCBE can’t tell from this general analysis why a group of examinees is less able than an earlier group. Most of the time, we would assume that “less able” means less innately talented, less well prepared, or less motivated. But “less able” can also mean distracted, stressed, and tired because of a massive software crash the night before. Anything that affects performance of a large number of test takers, even if the individual impact is relatively small, will make the group appear “less able” in the equating process that NCBE performs.

That’s step one of my theory: struggling with ExamSoft made a large number of July 2014 examinees perform somewhat below their real ability level. Those lower scores, in turn, lowered the overall performance level of the group–especially when compared, through the control questions, to earlier groups of examinees. If thousands of examinees went out partying the night before the July 2014 MBE, no one would be surprised if the group as a whole produced a lower mean score. That’s what happened here–except that the examinees were frantically trying to upload essay questions rather than partying.
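To make step one concrete, here is a minimal sketch of how a modest individual effect can move a group mean. The raw scores of 129 and 125 are the illustrative figures used above; the share of affected examinees is unknown, so the values tried here are purely hypothetical.

```python
def pooled_mean(typical_mean, affected_mean, affected_share):
    """Mean raw score for a pool in which `affected_share` of examinees
    average `affected_mean` and everyone else averages `typical_mean`."""
    if not 0.0 <= affected_share <= 1.0:
        raise ValueError("affected_share must be between 0 and 1")
    return (1 - affected_share) * typical_mean + affected_share * affected_mean


if __name__ == "__main__":
    # Hypothetical shares; the true share of affected examinees is not known.
    for share in (0.0, 0.25, 0.5):
        print(f"share affected = {share:.2f} -> pool mean = {pooled_mean(129, 125, share):.1f}")
```

Even if only a quarter of the pool lost four raw points, the mean for the entire pool would fall by a full point, and the equating process sees only that group-level mean.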

August 2014: Scaling

Once NCBE determines the ability level of a group of examinees, as well as the relative difficulty of the test, it adjusts the raw scores to account for these factors. The adjustment process is called “scaling” and it consists of adding points to the examinees’ raw scores. In a year with an easy test or “less able” examinees, the scaling process adds just a few points to each examinee’s raw score. Groups that faced a harder test or were “more able” get more points. [Note that the process is a little more complicated than this; each examinee doesn’t get exactly the same point addition. The general process, however, works in this way–and affects the score of every single examinee. See this article for more.]

This is the point at which the ExamSoft crisis started to affect all examinees. NCBE doesn’t scale scores just for test takers who seem less able than others; it scales scores for the entire group. The mean scaled score for the July 2014 MBE was 141.5, almost three points lower than the mean scaled score in July 2013 (which was 144.3). This was also the lowest scaled score in ten years. See this report (p. 35) for a table reporting those scores.

It’s essential to remember that the scaling process affects every examinee in every state that uses the MBE. Test takers in states unaffected by ExamSoft got raw scores that reflected their ability, but they got a smaller scaling increment than they would have received without ExamSoft depressing outcomes in other states. The direct ExamSoft victims, of course, suffered a double whammy: they obtained a lower raw score than they might have otherwise achieved, plus a lower scaling boost to that score.
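The single hit and the double whammy can be sketched with a toy calculation. It assumes the simplified picture above, in which scaling adds a flat increment to every raw score. The increments of 15 and 12 points are hypothetical placeholders chosen for illustration, not NCBE figures; the raw scores of 129 and 125 are the illustrative ones used earlier.

```python
def points_lost(raw, typical_raw, normal_increment, depressed_increment):
    """Scaled points lost relative to a typical examinee in a normal year.

    Toy model: scaled score = raw score + a flat group-wide increment.
    Real scaling is not a flat addition; this is only a sketch.
    """
    expected_scaled = typical_raw + normal_increment
    actual_scaled = raw + depressed_increment
    return expected_scaled - actual_scaled


if __name__ == "__main__":
    NORMAL_INCREMENT = 15      # hypothetical increment without the ExamSoft effect
    DEPRESSED_INCREMENT = 12   # hypothetical smaller increment with it

    # Examinee in an unaffected state: typical raw score, smaller increment.
    print("unaffected examinee loses",
          points_lost(129, 129, NORMAL_INCREMENT, DEPRESSED_INCREMENT), "points")
    # Direct ExamSoft victim: lower raw score and smaller increment.
    print("direct victim loses",
          points_lost(125, 129, NORMAL_INCREMENT, DEPRESSED_INCREMENT), "points")
```

Under these made-up numbers the unaffected examinee loses only the scaling difference, while the direct victim loses that difference plus the raw-score shortfall.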

Fall 2014: Essay Scoring

After NCBE finished calculating and scaling MBE scores, the action moved to the states. States (except for Louisiana, which doesn’t use the MBE) incorporated the artificially depressed MBE scores into their bar score formulas. Remember that those MBE scores were lower for every exam taker than they would have been without the ExamSoft effect.

The damage, though, didn’t stop there. Many (perhaps most) states scale the raw scores from their essay exams to MBE scores. Here’s an article that explains the process in fairly simple terms, and I’ll attempt to sum it up here.

Scaling takes raw essay scores and arranges them on a skeleton provided by that state’s scaled MBE results. When the process is done, the mean essay score will be the same as the mean scaled MBE score for that state. The standard deviations for both will also be the same.

What does that mean in everyday English? It means that your state’s scaled MBE scores determine the grading curve for the essays. If test takers in your state bombed the MBE, they will all get lower scores on the essays as well. If they aced the MBE, they’ll get higher essay scores.

Note that this scaling process is a group-wide one, not an individual one. An individual who bombed the MBE won’t necessarily flunk the essays as well. Scaling uses indicators of group performance to adjust essay scores for the group as a whole. The exam taker who wrote the best set of essays in a state will still get the highest essay score in that state; her scaled score just won’t be as high as it would have been if her fellow test takers had done better on the MBE.
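The essay-scaling step described above can be sketched as a simple linear transformation: convert each raw essay score to a standardized distance from the essay mean, then re-express it using the mean and standard deviation of the state’s scaled MBE scores. This is a minimal sketch of that general idea, not any state’s actual formula; the function name and all of the scores below are hypothetical.

```python
from statistics import mean, pstdev


def scale_essays_to_mbe(raw_essays, scaled_mbe):
    """Linearly rescale raw essay scores so that their mean and standard
    deviation match those of the group's scaled MBE scores."""
    essay_mean, essay_sd = mean(raw_essays), pstdev(raw_essays)
    mbe_mean, mbe_sd = mean(scaled_mbe), pstdev(scaled_mbe)
    if essay_sd == 0:
        raise ValueError("raw essay scores must not all be identical")
    return [mbe_mean + (score - essay_mean) / essay_sd * mbe_sd
            for score in raw_essays]


if __name__ == "__main__":
    raw_essays = [52, 60, 65, 71, 80]          # hypothetical raw essay scores
    mbe_typical = [130, 138, 144, 150, 158]    # hypothetical scaled MBE scores
    mbe_depressed = [127, 135, 141, 147, 155]  # same group, three points lower

    typical = scale_essays_to_mbe(raw_essays, mbe_typical)
    depressed = scale_essays_to_mbe(raw_essays, mbe_depressed)
    for raw, a, b in zip(raw_essays, typical, depressed):
        print(f"raw {raw}: scaled {a:.1f} (typical MBE) vs {b:.1f} (depressed MBE)")
```

The same set of essays earns lower scaled scores when the group’s MBE results are lower, yet the rank order of the essay writers never changes.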

Scaling raw essay scores, like scaling the raw MBE scores, produces good results in most years. If one year’s graders have a snit and give everyone low scores on the essay part of the exam, the scaling process will say, “wait a minute, the MBE scores show that this group of test takers is just as good as last year’s. We need to pull up the essay scores to mirror performance on the MBE.” Conversely, if the graders are too generous (or the essay questions were too easy), the scaling process will say “Uh-oh. The MBE scores show us that this year’s group is no better than last year’s. We need to pull down your scores to keep them in line with what previous graders have done.”

The scaled MBE scores in July 2014 told the states: “Your test takers weren’t as good this year as last year. Pull down those essay scores.” Once again, this scaling process affected everyone who took the bar exam in a state that uses the MBE and scales essays to the MBE. I don’t know how many states are in the latter camp, but NCBE strongly encourages states to scale their essay scores.

Fall 2014: MPT Scoring

You guessed it. States also scale MPT scores to the MBE. Once again, MBE scores told them that this group of exam takers was “less able” than earlier groups so they should scale down MPT scores. That would have happened in every state that uses both the MBE and MPT, and scales the latter scores to the former.

Conclusion

So there you have it: this is how poor performance by ExamSoft victims could have depressed scores for exam takers nationwide. For every exam taker (except those in Louisiana) there was at least a single hit: a lower scaled MBE score. For many exam takers there were three hits: lower scaled MBE score, lower scaled essay score, and lower scaled MPT score. For some direct victims of the ExamSoft crisis, there was yet a fourth hit: a lower raw score on the MBE. But, as I hope I’ve shown here, those raw scores were also pebbles that set off much larger ripples in the pond of bar results. If you throw enough pebbles into a pond all at once, you trigger a pretty big wave.

Erica Moeser, the NCBE President, has defended the July 2014 exam results on the ground that test takers were “less able” than earlier groups of test takers. She’s correct in the limited sense that the national group of test takers performed less well, on average, on the MBE than the national group did in previous years. But, unless NCBE has done more sophisticated analyses of state-by-state raw scores, that doesn’t tell us why the exam takers performed less “ably.”

Law deans like Brooklyn’s Nick Allard are clearly right that we need a more thorough investigation of the July 2014 bar results. It’s too late to make whole the 2,300 or so test takers who may have unfairly failed the exam. They’ve already grappled with a profound sense of failure, lost jobs, studied for and taken the February exam, or given up on a career practicing law. There may, though, be some way to offer them redress–at least the knowledge that they were subject to an unfair process. We need to unravel the mystery of July 2014, both to make any possible amends and to protect law graduates in the future.

I plan to post some more thoughts on this, including some suggestions about how NCBE (or a neutral outsider) could further examine the July 2014 results. Meanwhile, please let me know if you have thoughts on my analysis. I’m not a bar exam insider, although I studied some of these issues once before. This is complicated stuff, and I welcome any comments or corrections.

Updated on September 21, 2015, to correct reported pass rates.

Data, Bar Exam, ExamSoft, July 2014

About Law School Cafe

Cafe Manager & Co-Moderator

Deborah J. Merritt

Cafe Designer & Co-Moderator

Kyle McEntee

Law School Cafe is a resource for anyone interested in changes in legal education and the legal profession.
